by Vaibhavi M.


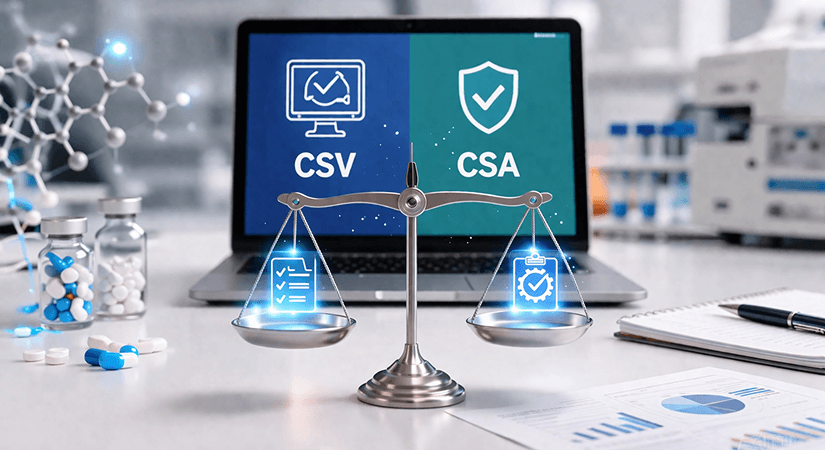

Computer System Validation (CSV) vs. Computer Software Assurance (CSA): What Are the Main Differences?

Guide to Computer System Validation (CSV) vs Computer Software Assurance (CSA) in pharma with key differences and risk-based approach.

If you work in the pharmaceutical or medical device industry, you have probably heard the terms CSV and CSA being thrown around quite a bit lately. And if you have ever tried to look them up, you may have found yourself staring at walls of regulatory language that felt more confusing than helpful.

So let's break this down in plain, simple terms: what these two approaches actually mean, how they differ, and why it matters for your organisation.

What Is Computer System Validation (CSV)?

Computer System Validation, or CSV, has been the standard approach in regulated industries for decades. At its core, CSV is a documented process that ensures a computer system does exactly what it was designed to do, consistently and repeatedly. The goal is to guarantee data integrity, product quality, and compliance with applicable GxP regulations.

21 CFR Part 11, the FDA regulation that governs electronic records and electronic signatures, took effect in 1997. The FDA followed it with its General Principles of Software Validation guidance in 2002 and its Part 11 scope and application guidance in 2003. Since then, CSV has been the go-to framework for pharmaceutical companies, clinical research organisations, and medical device manufacturers when implementing or upgrading a computerised system.

The Four Questions That Drive the CSV Process

In a CSV approach, the validation process follows a structured life cycle. Before any testing begins, a team needs to answer four fundamental questions:

- What will be validated?

- What are the acceptance criteria?

- How will it be validated?

- And who will do the validating?

These questions shape the entire documentation strategy and help define the scope of the work.

The Three Core Specification Documents

The validation itself is built around three key specification documents. The User Requirements Specification (URS) captures what the system must do from the user's perspective; for example, a lab system might need to track analyst training records, automatically assign tasks, and comply with 21 CFR Part 11.

The Functional Specification (FS) describes how the system will meet those user needs, including the interface design and data processing. The Design Specification (DS) goes deeper into the technical architecture, database design, security measures, network requirements, and interface configurations.

How Testing Works: IQ, OQ, and PQ

Once these documents are in place, testing follows the classic V-model (also called the linear approach). Installation Qualification (IQ) verifies that the system has been installed correctly in accordance with the design specifications. Operational Qualification (OQ) checks that the system functions as described in the functional specification. And Performance Qualification (PQ) confirms the system performs in line with user requirements under real-world conditions. In short, every specification has a corresponding test, resulting in a significant volume of documentation.
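The pairing of each specification with its qualification stage can be sketched as a small traceability check. This is only an illustrative Python sketch: the `Requirement` class, the `untested` helper, and the requirement IDs are hypothetical and do not come from any regulation or validation tool.

```python
from dataclasses import dataclass

# V-model pairing: each specification level is verified by one qualification stage.
SPEC_TO_STAGE = {"DS": "IQ", "FS": "OQ", "URS": "PQ"}


@dataclass(frozen=True)
class Requirement:
    req_id: str  # hypothetical identifier, e.g. "URS-001"
    spec: str    # "URS", "FS", or "DS"
    text: str


def required_stage(req):
    """Return the qualification stage that must test this requirement."""
    return SPEC_TO_STAGE[req.spec]


def untested(requirements, executed_tests):
    """List requirement IDs with no executed test in the matching stage.

    executed_tests is a set of (req_id, stage) pairs.
    """
    return [
        r.req_id
        for r in requirements
        if (r.req_id, required_stage(r)) not in executed_tests
    ]


# Hypothetical requirements for the lab system example.
requirements = [
    Requirement("URS-001", "URS", "Track analyst training records"),
    Requirement("FS-001", "FS", "Show training status on the analyst screen"),
    Requirement("DS-001", "DS", "Install the database on the validated server"),
]
executed = {("DS-001", "IQ"), ("FS-001", "OQ")}

print(untested(requirements, executed))  # ['URS-001']
```

Here the user requirement has no PQ test yet, so it is flagged. Under CSV, every requirement at every level would need a matching entry before the system is released.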

The Strengths and the Criticisms of CSV

This documentation-heavy nature of CSV has always been both its strength and its biggest criticism. Yes, it is thorough. Yes, it builds a solid paper trail. But it is also time-consuming, expensive, and sometimes leads organisations to create mountains of paperwork that add little actual value to patient safety or product quality.

What Is Computer Software Assurance (CSA)?

This is where Computer Software Assurance (CSA) comes into play. The FDA introduced CSA through a draft guidance document titled "Computer Software Assurance for Production and Quality System Software," published on September 13, 2022. The driving idea behind CSA is simple: instead of validating everything with the same level of rigour regardless of risk, focus your efforts where they matter the most.

CSA is a risk-based approach that aims to establish and maintain confidence that software is fit for its intended use. Rather than generating exhaustive documentation for every system feature, CSA asks teams to think critically about what could go wrong and the severity of the consequences. The higher the risk to patient safety or product quality, the more rigorous the testing needs to be. For lower-risk software features, less documentation and simpler testing methods are perfectly acceptable.

Step 1: Identifying the Intended Use

The CSA process begins with identifying the intended use of the software. The FDA distinguishes between software used directly as part of production or a quality system, such as tools that automate manufacturing processes or collect quality data, and software used to support those systems, such as testing tools or general record-keeping applications. Software used for general business functions, such as email or accounting, falls entirely outside the scope.
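As a rough illustration, the three-way split described above could be expressed as a simple classification. The `IntendedUse` enum, the function name, and the two yes/no questions are assumptions made for this sketch; a real assessment relies on documented judgement rather than two flags.

```python
from enum import Enum


class IntendedUse(Enum):
    DIRECT = "used directly as part of production or the quality system"
    SUPPORTING = "used to support production or the quality system"
    OUT_OF_SCOPE = "general business use, outside the scope of CSA"


def classify_intended_use(used_directly, used_in_support):
    """Map two yes/no answers to an intended-use category (hypothetical helper)."""
    if used_directly:
        return IntendedUse.DIRECT
    if used_in_support:
        return IntendedUse.SUPPORTING
    return IntendedUse.OUT_OF_SCOPE


# Examples taken from the categories described in the text.
examples = {
    "manufacturing process automation": classify_intended_use(True, False),
    "testing tool": classify_intended_use(False, True),
    "email": classify_intended_use(False, False),
}

for name, use in examples.items():
    print(f"{name}: {use.name}")
```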

Step 2: Assessing the Risk

Once the intended use is clear, the next step is to assess the risk. A software feature is considered high risk when its failure could lead to a quality issue that compromises patient safety.

For instance, software that maintains critical process parameters like temperature or pressure, or software that analyses product acceptability without additional human review, would typically fall into the high-risk category. On the other hand, software used for CAPA routing, complaint tracking, or change control management is generally considered not high risk.
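The high-risk test described above boils down to two linked questions, which the following sketch makes explicit. The `Feature` class and its field names are hypothetical, and the answers given for each example feature are illustrative assumptions rather than regulatory determinations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    failure_can_cause_quality_issue: bool
    quality_issue_can_compromise_safety: bool


def risk_level(feature):
    """Return 'high' only when a failure could lead to a quality issue
    that in turn compromises patient safety; otherwise 'not high'."""
    if (feature.failure_can_cause_quality_issue
            and feature.quality_issue_can_compromise_safety):
        return "high"
    return "not high"


# Illustrative answers only; a real assessment documents its reasoning.
features = [
    Feature("temperature control", True, True),
    Feature("CAPA routing", False, False),
]

for f in features:
    print(f"{f.name}: {risk_level(f)}")
```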

Step 3: Determining the Right Type of Testing

The level of risk then determines the type of testing required. High-risk features call for robust scripted testing: structured, documented, and traceable to requirements. Medium-risk features may use limited scripted testing, a hybrid of scripted and unscripted methods.

For low-risk features, unscripted testing methods such as exploratory testing, ad hoc testing or error-guessing are perfectly adequate. The tester uses their knowledge and judgment to explore the system without the need for formal test scripts.

Step 4: Maintaining the Right Records

Records still need to be maintained under CSA, but they are leaner and more purposeful. A CSA record should capture the intended use of the software feature, the determined risk level, a description of the testing conducted, any issues found and how they were resolved, a conclusion on whether the results are acceptable, the date of testing, and the name of the person who performed it.
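The record contents listed above map naturally onto a small data structure with a completeness check. The `CsaRecord` class, its field names, and the sample values are all hypothetical; this is a sketch of the idea, not a prescribed record format.

```python
from dataclasses import dataclass, fields
from datetime import date
from typing import List, Optional


@dataclass
class CsaRecord:
    intended_use: str
    risk_level: str
    testing_description: str
    issues_and_resolutions: str  # write "None found" rather than leaving it blank
    conclusion: str
    test_date: Optional[date]
    tester: str


def missing_fields(record) -> List[str]:
    """Return the names of fields that are empty or unset."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


# Sample values are invented for illustration.
record = CsaRecord(
    intended_use="Routes CAPA records to reviewers",
    risk_level="not high",
    testing_description="Exploratory testing of routing rules",
    issues_and_resolutions="None found",
    conclusion="Results acceptable",
    test_date=date(2024, 1, 15),
    tester="",  # left blank on purpose to show the check
)

print(missing_fields(record))  # ['tester']
```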

CSV vs. CSA: The Key Differences

Both CSV and CSA exist to ensure that computerised systems in regulated industries are reliable, compliant, and fit for use. Both involve planning, testing, documentation, and reporting. And both apply to systems that impact patient safety, product quality, and data integrity. But the similarities begin to fade when you examine the philosophies behind each approach.

CSV: Compliance-Driven by Nature

CSV is fundamentally compliance-driven. It validates that a system meets a predefined set of specifications, and it does so with equal thoroughness across all system features. The documentation burden is high, and the process is largely the same regardless of whether you are testing a mission-critical data acquisition system or a simple document management feature.

CSA: Assurance-Driven by Design

CSA, by contrast, is assurance-driven. It asks teams to think critically about risk and apply testing effort in proportion to what is actually at stake. It replaces the one-size-fits-all mentality of CSV with a targeted, flexible, and more intelligent approach. Less time is spent writing test scripts for low-risk features, and more time is spent on meaningful assurance for areas that could truly harm patients or compromise product quality.

What CSA Means in Practice

From a practical standpoint, CSA reduces cycle times for test creation, review, and approval. It cuts down the number of generated documents. It allows organisations to take credit for supplier-provided testing evidence rather than repeating the same validation work. And it supports companies that are moving toward automation and agile development practices, where rigid, linear validation models often create bottlenecks.

Does CSA Replace CSV?

One important distinction worth noting: CSA does not eliminate the need for CSV. Rather, it redefines how validation is carried out. Some highly regulated systems may still benefit from the comprehensive structure that CSV provides. The decision of which approach to use, or whether to blend both, depends on the nature of the software, the level of risk involved, and the organisation's regulatory obligations.

Making the Transition from CSV to CSA

For organisations that have been running on CSV for years, moving toward CSA can feel like a big cultural shift. And in many ways, it is. The transition requires teams to stop thinking of validation as a documentation exercise and start thinking of it as a risk-management exercise.

Practically speaking, the transition involves evaluating current validation practices to identify areas where documentation can be reduced without compromising compliance. It means training quality and IT teams on CSA principles and risk-based thinking.

It means learning to leverage vendor documentation and supplier qualification data more effectively, rather than duplicating validation efforts in-house. And it means embracing tools and technologies that can automate parts of the assurance process.

The shift does not have to happen overnight. Many organisations choose to apply CSA principles to new system implementations while maintaining their existing CSV approach for legacy systems. This phased strategy allows teams to build experience and confidence with the new approach without disrupting ongoing operations.

Why This Matters for Your Organisation

The move from CSV to CSA reflects a broader shift in the FDA's approach to quality. Rather than equating compliance with paperwork, the FDA is pushing toward a culture where quality is embedded in processes, decisions are grounded in risk-based thinking, and validation efforts are focused on where they create real value.

For pharmaceutical and medical device companies, this is ultimately a good thing. It means less time buried in test scripts and more time focused on building systems that are genuinely safe, reliable, and effective. It means faster implementation timelines, more efficient resource use and validation practices that actually scale with the complexity of modern software.

Whether you are still fully committed to CSV, actively exploring CSA, or somewhere in between, understanding the difference between these two approaches is the first step toward making smarter decisions about how your organisation manages and validates computerised systems.

FAQs

Q1. What is the main difference between CSV and CSA in pharma?

CSV (Computer System Validation) is a documentation-heavy, compliance-driven approach that validates all system features with equal rigour. CSA (Computer Software Assurance) is a risk-based approach introduced by the FDA that focuses testing efforts on areas with the highest impact on patient safety and product quality, reducing unnecessary documentation for low-risk features.

Q2. Is CSA replacing CSV in the pharmaceutical industry?

CSA is not replacing CSV entirely. Instead, it redefines how validation is performed. The FDA introduced CSA to make the validation process more efficient and risk-focused. Organisations may still use CSV for highly regulated or complex systems while applying CSA principles to other software where a lighter-touch approach is appropriate.

Q3. What regulations govern CSV and CSA in life sciences?

CSV is shaped primarily by FDA 21 CFR Part 11 and the wider GxP regulations, along with GAMP 5, the ISPE industry guidance that categorises software by type and promotes a risk-based approach to computerised systems. CSA is guided by the FDA's 2022 draft guidance on "Computer Software Assurance for Production and Quality System Software," which outlines a risk-based framework for assurance activities.

Q4. What types of testing are used in CSA?

CSA matches the type of testing to the risk level. For high-risk software features, robust scripted testing with formal documentation is expected. Medium-risk features may use limited scripted testing, a hybrid of scripted and unscripted methods. For low-risk features, unscripted testing methods such as exploratory testing, ad hoc testing, and error-guessing are acceptable, reducing the need for formal test scripts and extensive documentation.

Q5. How does a pharma company transition from CSV to CSA?

A transition from CSV to CSA involves applying risk-based thinking to existing validation processes, reducing scripted testing for low-risk system features, leveraging supplier-provided qualification evidence, and training quality and IT teams on CSA principles. Many organisations adopt a phased approach, starting with new system implementations before revisiting legacy systems.
