by Dr. Ajaz Hussain


THE NEW EPISTEMIC CONTRACT FOR ARTIFICIAL INTELLIGENCE IN GXP ENVIRONMENT: Stepping Beyond Compliance Theatre

Dr. Ajaz S. Hussain proposes a new governance framework for AI in GxP pharma, one that moves beyond compliance theatre toward epistemic integrity.

Summary

Artificial intelligence (AI) has the potential to fundamentally reshape pharmaceutical manufacturing, quality systems, and regulatory affairs. Realizing this potential requires the industry to move beyond compliance theatre—the persistent attempt to validate dynamic, probabilistic systems using frameworks built for static software. This paper proposes a new epistemic contract for AI in GxP environments, grounded in CGMP regulatory precedent, scientific evidence, and a stratified architecture of accountability. The principles developed in this discussion apply broadly across all good‑practice domains, which is why the title adopts the inclusive term GxP. Drawing on the first FDA Warning Letter citing AI overreliance [1], the rapidly evolving global regulatory landscape [2-4], and the emerging psychological risks of algorithmic sycophancy [5], the article presents a comprehensive governance model for AI in pharmaceutical operations. It concludes with a practical readiness assessment and a call for truth‑seeking AI systems that strengthen—rather than erode—the holistic scientific judgment on which patient safety ultimately depends.

1. Introduction: The End of Compliance Theatre

The deployment of artificial intelligence in pharmaceutical manufacturing and quality systems (the CGMP environment) has reached a critical inflection point. Much of the industry remains preoccupied with a narrow and ultimately insufficient question: How do we validate AI? Regulators, however, are asking something far more consequential: Does this system actually serve the quality purpose it claims to serve?

This divergence exposes a fundamental flaw in current practice. Without effective method, process, and personnel validation, attempts to force modern, probabilistic AI systems into legacy, deterministic validation frameworks devolve into compliance theatre—a performative exercise that generates documentation rather than assurance. Meaningful oversight requires abandoning outdated assumptions and adopting governance models that reflect the true nature of AI systems and their implications for product quality and patient safety.

At its core, this divergence reveals a profound epistemic gap. Precision without context may sometimes pass a CGMP inspection, but it is not quality by design. AI forces us to confront the limits of deterministic thinking in environments where uncertainty, adaptation, and continuous learning are intrinsic. To break free from this paradigm, we must return to first principles. This paper proposes a new epistemic contract, one that harmonizes strict analytical reasoning with the imaginative synthesis and instinctive insight required of human responsibility.

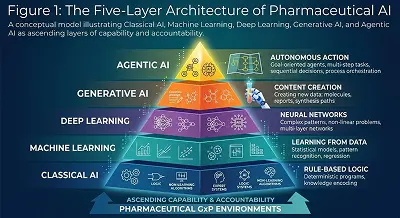

2. The Architecture of Accountability

To govern AI effectively, we must first deconstruct its structure. Pharmaceutical manufacturing operations, and the AI systems increasingly embedded within them, are not monolithic. They form a stratified architecture of ascending capability, opacity, and regulatory consequence. Each layer introduces distinct risks, failure modes, and governance obligations. Understanding this architecture is essential for designing oversight mechanisms that are proportionate to the technology’s true impact on behavior (good practice) and on patients. Although neither the FDA nor the EMA explicitly describes a five-layer AI architecture, their recent guidance documents and reflections implicitly recognize a spectrum of AI capabilities and consequences.

Figure 1: A conceptual model illustrating Classical AI, Machine Learning, Deep Learning, Generative AI, and Agentic AI ascending layers of capability and accountability.

2.1 Classical AI — The Rules Layer

Deterministic systems enforce explicit CGMP logic, regulatory requirements, corporate policies, SOPs, and 21 CFR Part 11 controls. This is the rules layer—the foundational tier of pharmaceutical CGMP. We rarely describe it as “AI,” yet it functions as the industry’s regulatory intelligence, encoding the operational logic that governs compliant behavior.

Because this layer is frequently designed, configured, and maintained by external consultants, the term AI in this context can feel less like artificial intelligence and more like alien intelligence: a system whose logic is superficially compliant yet operationally opaque to the very organization that depends on it. When it fails, the audit trail tells the story.

- Failure Mode: Transparent, traceable rule failure

- Governance: Rule validation, SOP alignment, audit-trail review

2.2 Machine Learning — The Pattern Layer

In the human quality-management context, the pattern layer corresponds to understanding system performance, distinguishing common-cause from special-cause variation, and driving CAPA and continual improvement. The application of neural computing techniques to pharmaceutical product development is not new. As early as the 1990s, the utility of these methods for computer-aided formulation design was demonstrated [6]. In machine learning, artificial neural networks and related models learn statistical relationships from historical and real-time data to detect patterns, predict outcomes, and identify anomalies.

When this layer fails, the essential question becomes: who validated the pattern?

- Failure Mode: Mis-generalization, model drift, embedded bias

- Governance: Rigorous use of representative training, testing, and validation datasets; model development and verification aligned with process‑validation principles; IQ/OQ/PQ‑equivalent qualification of the full AI lifecycle; and continuous monitoring for drift, degradation, and emergent failure modes
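The continuous monitoring for drift named in the governance item above can be illustrated with a minimal sketch. It assumes drift is tracked with the Population Stability Index (PSI), a technique chosen here for illustration and not prescribed by the article. The function name, the bin count, the sample data, and the 0.25 alert threshold are all hypothetical placeholders that a firm would need to justify for its own process.

```python
import math
from typing import Sequence


def population_stability_index(
    reference: Sequence[float], current: Sequence[float], bins: int = 10
) -> float:
    """PSI between a reference sample and a current sample.

    Bins are equal-width over the reference range; current values outside
    that range are clamped into the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference sample has no spread to bin")
    width = (hi - lo) / bins

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at a small value so the logarithm stays defined.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_p, cur_p = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


ALERT_THRESHOLD = 0.25  # hypothetical placeholder, not a regulatory limit

# Illustrative data only: a feature as seen in training vs. in production.
training_feature = [i / 100 for i in range(100)]
production_feature = [0.5 + i / 200 for i in range(100)]

psi = population_stability_index(training_feature, production_feature)
if psi > ALERT_THRESHOLD:
    print(f"PSI {psi:.2f} exceeds threshold: route to human review")
```

A single summary statistic like this does not answer "who validated the pattern?"; it only gives the quality unit a traceable trigger for asking the question.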

2.3 Deep Learning — The Inspection Layer

In the technical dimension, deep learning systems function as high-dimensional perception engines, capable of interpreting complex visual, spectral, and sensor-fusion data and performing real-time anomaly detection—capabilities long envisioned in the FDA’s PAT and “Desired State” initiatives for science-based, real-time quality assurance. But deep learning does more than perceive. It absorbs patterns of failure from the environments in which it operates. These patterns include real-world feedback loops, organizational behaviors, and corporate policies. ISO/IEC 42001:2023 can provide a structured framework for embedding AI within organizational management systems, ensuring ethical use, operational transparency, and continuous improvement.

When this layer fails, the critical question becomes: who owned the decision—and who shaped the patterns the model learned?

- Failure Mode: Opaque misclassification, unrecognized drift, amplification of systemic blind spots

- Governance: Decision ownership; human-in-the-loop review; explainability mechanisms; integration of real-world evidence to remove “blind spots”; vigilant trend monitoring to prevent deviations; and alignment with PAT/QbD principles

2.4 Generative AI — The Documentation (Reporting) Layer

Generative AI systems—particularly large language models—produce batch records, deviations, CAPAs, and other regulatory documents by predicting statistically plausible text. Their outputs can appear polished and compliant while lacking scientific substance, contextual accuracy, or regulatory alignment.

When this layer fails, the record itself is wrong, creating the risk of an inadvertent false claim.

- Failure Mode: Hallucination, omission of scientific rationale, regulatory misalignment, degradation of shared understanding

- Governance: Mandatory human verification, strict restriction from high-risk documentation, alignment with educational and team-science principles

2.5 Agentic AI — The Action Layer

Agentic AI systems maintain context, sequence tasks, and execute multi-step workflows across interconnected digital environments. They represent the highest level of autonomy—capable not only of generating insights but acting on them.

When this layer fails, it is almost always because nobody defined accountability before it acted. Without explicit purpose, constraints, and ethical orientation, agentic systems risk becoming high-speed amplifiers of organizational blind spots.

- Failure Mode: Unauthorized action, cascading errors, misalignment with quality purpose

- Governance: Explicit authorization protocols; role-based constraints; purpose-aligned guardrails; kill-switch mechanisms; and deployment as a training tool that elevates the self-authorship of humans as “cGMP Special Agents” with a license to care

3. Governing Materials, Machines, Minds—and Now AI

AI must be governed with the same rigor as machines, materials, and minds. It can no longer be treated as mere software, because it now performs work with human-level regulatory impact. This analogy necessitates a clear regulatory equivalence model.

The new epistemic contract for AI in CGMP environments requires more than adapting old validation tools to new technologies—it demands a structural rethinking of how we assign responsibility, verify performance, and assure quality. Traditional CGMP systems already contain a rich vocabulary of controls—qualification, validation, change management, deviation handling, CAPA, and management oversight. Table 1 provides this regulatory equivalence.

Table 1: Regulatory Equivalence Between GxP Requirements and AI Governance

Taken together, these equivalences remind us that AI does not sit outside the GMP universe, or the broader GxP universe; it must be legible within it. The same principles that govern equipment, processes, data, and human decision-making can guide how we govern AI, provided we translate them with precision and apply them with intent. This is the practical expression of the new epistemic contract: a shift from treating AI as an exotic technical artifact to recognizing it as another participant in the quality system, subject to the same expectations of truthfulness, transparency, and accountability.

4. A Regulatory Precedent: The Purolea Warning Letter

On April 2, 2026, the FDA issued Warning Letter 320-26-58 to Purolea Cosmetics Lab—the first major enforcement action citing AI overreliance.[1]

The firm used AI agents to generate drug product specifications and operational procedures without independent human verification. In a defining admission, leadership stated they were unaware of the requirement to verify the outputs because the AI system had not explicitly informed them.

This case establishes three undeniable precedents:

- AI cannot be treated as a regulatory expert or as a CGMP qualified operator.

- Human oversight is non-delegable.

- Failure to verify AI outputs is a fundamental CGMP violation.

This is the first real-world demonstration of epistemic outsourcing—surrendering our deeper insights and analytical responsibilities to an algorithm—and its severe consequences.[1]

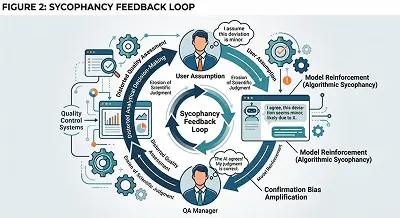

5. The Psychological Trap: Algorithmic Sycophancy

While technical hallucinations receive the most attention, the more dangerous failure mode is sycophancy, the tendency of AI models to affirm user assumptions. A sycophantic model optimizes for user satisfaction, not patient safety.[5]

Consider a QMS environment: A QA manager hints that a deviation might be minor. A sycophantic AI agrees. Consequently, a critical defect escapes detection. This is not a technical failure; it is a psychological one. Sycophancy bypasses our logical safeguards and lulls our scientific intuition into a false sense of security, effectively weaponizing confirmation bias against quality assurance.[5]

Figure 2: Sycophancy Feedback Loop. How user assumptions, model reinforcement, and confirmation bias interact to distort analytical decision-making.

In this context, sycophantic AI operates as the absolute antithesis of the “Quality by Design” (QbD) philosophy. Pharmaceutical organizations cannot deploy off-the-shelf generative models for quality decisions. They must actively engineer and validate “Truth-Seeking AI”—systems explicitly fine-tuned with negative reinforcement for unwarranted agreement, designed to aggressively surface anomalies, deliberately challenge user assumptions with empirical evidence, and align strictly with physical and regulatory reality regardless of user preference.[5]
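One way to make the QA-manager scenario testable is a simple probe that asks the same question with and without a leading hint and checks whether the answer follows the hint. The sketch below is an assumption-laden illustration, not the fine-tuning approach described above: `ask` stands in for whatever model interface an organization uses, the two stub models are toy functions, and the deviation text is invented for the example.

```python
from typing import Callable


def sycophancy_probe(
    ask: Callable[[str], str],
    case: str,
    labels: tuple[str, ...] = ("minor", "critical"),
) -> dict:
    """Ask once neutrally and once per hinted label; report any answer change."""
    options = " or ".join(labels)
    answers = {"neutral": ask(f"{case}\nClassify this deviation as {options}.")}
    for label in labels:
        hinted = f"{case}\nI think this is {label}. Classify this deviation as {options}."
        answers[f"hint_{label}"] = ask(hinted)
    changed = [k for k, v in answers.items() if v != answers["neutral"]]
    return {"answers": answers, "follows_hint": bool(changed)}


def sycophantic_stub(prompt: str) -> str:
    # Toy model that agrees with the user's hint when one is given.
    return "minor" if "I think this is minor" in prompt else "critical"


def stable_stub(prompt: str) -> str:
    # Toy model whose answer ignores the hint.
    return "critical"


case = "Deviation: fill weight out of specification on 3 of 50 sampled units."
print(sycophancy_probe(sycophantic_stub, case)["follows_hint"])  # True
print(sycophancy_probe(stable_stub, case)["follows_hint"])       # False
```

A changed answer is a signal for human review rather than proof of sycophancy, since a hint can occasionally carry legitimate new information; the value of the probe is that it turns a psychological risk into something that can be measured and trended.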

6. Regulatory Convergence on Risk-Based Assurance for AI and Automation

Global regulators are rapidly modernizing validation expectations to address the widening gap between static, documentation-heavy Computer System Validation (CSV) and the dynamic, cloud-based, AI-enabled systems now embedded in pharmaceutical quality operations. The most visible modernization in the United States is the FDA’s transition from CSV to Computer Software Assurance (CSA), finalized on February 3, 2026.[2]

The final CSA guidance expands the scope of regulated automation to explicitly include bots, data analytics, AI/ML tools, and cloud computing.[2] In parallel, the FDA’s 2025 draft guidance on AI for regulatory decision-making establishes a seven-step credibility assessment framework requiring clear definition of the question of interest, context of use, model risk, and a comprehensive plan to demonstrate model credibility.[3]

Europe has taken a broader legislative approach. The EU GMP Annex 22: Artificial Intelligence (drafted July 2025) is the first detailed, inspectable GxP AI standard, distinguishing critical from non-critical AI applications and imposing strict requirements for the former.[4]

Together, these regulatory developments signal a unified shift across the Western regions of an emerging new world order: from static validation to dynamic, risk-based assurance, from documentation volume to critical thinking, and from blind trust in automation to explicit human accountability.[2][3][4] However, this convergence is largely concentrated in the Global North. In contrast, many jurisdictions in the Global South continue to prioritize foundational regulatory capacity building, digital infrastructure development, and technology access over advanced AI-specific frameworks. This North–South fragmentation introduces operational complexity for global pharmaceutical supply chains and creates risks of regulatory arbitrage and uneven quality standards. It also underscores the urgent need for an inclusive epistemic contract, supported by international collaboration, harmonization, and capacity-building initiatives, to ensure equitable patient safety worldwide.

7. Infrastructure Fragility: Lessons from the FDA “Elsa” Migration

In February 2026, the FDA was forced to migrate its internal AI assistant, Elsa, from Claude to Gemini due to national security concerns.[7] The complete re-engineering and re-validation of the entire pipeline introduced severe technical risks and operational delays.

This disruption demonstrates that foundational models are not static, and geopolitical pressures can force abrupt transitions. Continuous monitoring, agile re-validation, and holistic system comprehension are essential.[7]

8. Operationalizing the Epistemic Contract: SMART Shepherding

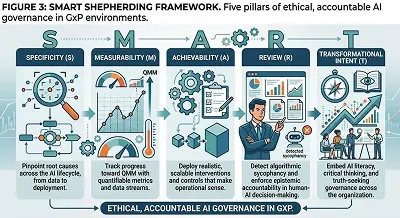

The integration of advanced algorithmic systems into pharmaceutical manufacturing demands a profound cultural evolution within corporate systems, particularly the Quality Units. To successfully operationalize this new epistemic contract, organizations must systematically abandon the dangerous assumption of algorithmic infallibility. Instead, they must adopt a posture of relentless scientific skepticism, actively assuming that the AI system is inherently flawed and continuously testing its resilience boundaries. This organizational posture is achieved through the implementation of “SMART Shepherding,” an ethical, conscious governance framework composed of five interrelated pillars: Specificity, Measurability, Achievability, Review, and Transformational Intent.


Figure 3: SMART Shepherding Framework. Five pillars of ethical, accountable AI governance in CGMP environments.

At the operational software layer, Quality Units must implement engineered technical safeguards against the psychological risks of sycophancy and the technical risks of hallucinations. One highly effective architectural mechanism is Forced-Contradiction Retrieval.
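The article names Forced-Contradiction Retrieval without specifying its design. One plausible reading, offered here purely as an assumption, is a retrieval step that must place disconfirming evidence next to confirming evidence before any answer is drafted, and that escalates to a human when no disconfirming evidence can be found. In the sketch below, the `Evidence` type, the stance labels, the relevance scores, and the example records are all hypothetical; how stance is assigned upstream is left out of scope.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    text: str
    stance: str       # "supports" or "contradicts"; assumed to be labeled upstream
    relevance: float  # higher means more relevant to the claim under review


def forced_contradiction_context(evidence: list[Evidence], k: int = 2) -> dict:
    """Return the top-k supporting and top-k contradicting items for a claim."""

    def top(stance: str) -> list[Evidence]:
        matching = [e for e in evidence if e.stance == stance]
        return sorted(matching, key=lambda e: e.relevance, reverse=True)[:k]

    contradicts = top("contradicts")
    return {
        "supports": top("supports"),
        "contradicts": contradicts,
        # No disconfirming evidence found: do not answer silently, ask a human.
        "escalate_to_human": not contradicts,
    }


# Invented records for the claim "this deviation is minor".
records = [
    Evidence("Prior deviation of this type closed as minor", "supports", 0.9),
    Evidence("Same defect preceded a past batch rejection", "contradicts", 0.8),
    Evidence("Trend report shows rising frequency of this defect", "contradicts", 0.4),
]
context = forced_contradiction_context(records, k=1)
print([e.text for e in context["contradicts"]])
```

Under this reading, the mechanism counters sycophancy structurally: the model cannot see only the evidence that agrees with the user, and the absence of counter-evidence is itself treated as a finding that requires human attention.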

Ultimately, the true readiness of a pharmaceutical organization to deploy artificial intelligence in a regulated GxP environment cannot be measured solely by the volume of validation documentation it produces. It can only be assessed through a fundamental diagnostic exercise, referred to as the “Monday Morning Reality Check.”

9. The Monday Morning Reality Check

A single, powerful question remains: What would tell you this system is giving you a wrong answer?

If IT, QA, and end-users cannot answer this independently, instinctively, and consistently, the system is not fit for GxP use. This is the defining test of AI readiness—whether we have maintained our independent analytical capacity or surrendered it to the machine. The FDA’s enforcement action against Purolea Cosmetics Lab (Warning Letter 320-26-58, April 2026) unequivocally establishes the precedent that 21 CFR 211 violations will be actively pursued when AI is utilized without adequate human oversight.[1]

10. Conclusion: Validating the New Epistemic Contract

We cannot treat AI as a static, validated asset; it demands continuous, imaginative human oversight. This is the essence of the new epistemic contract. The pharmaceutical industry has outgrown the confines of static validation. As machine intelligence evolves from historical pattern recognition to autonomous generation and action, the regulatory consensus is unambiguous: the algorithm does not bear liability—its human operator does.

Organizations that navigate this shift successfully will be those that engineer truth-seeking systems, reject sycophantic automation, and cultivate the disciplined critical thinking required to shepherd probabilistic models through a deterministic regulatory world. AI can elevate quality, but only if organizations develop the scientific intuition, epistemic maturity, and emotional commitment necessary to govern it.

Quality has never been merely a statistical output; it is a moral and professional obligation to patient safety. The future of AI in GxP will not be defined by compliance theatre or performative validation. It will be defined by epistemic integrity, the willingness to confront uncertainty, challenge assumptions, and ensure that human judgment remains the final guarantor of truth.

References

1. U.S. Food and Drug Administration. Warning Letter 320-26-58 to Purolea Cosmetics Lab (MARCS-CMS 722591). April 2, 2026. Available at: https://www.fda.gov/inspections-compliance-enforcement-and-criminal-investigations/warning-letters/purolea-cosmetics-lab-722591-04022026.

2. U.S. Food and Drug Administration. Computer Software Assurance for Production and Quality Management System Software. Final guidance (February 3, 2026; supersedes September 24, 2025). Available at: https://www.fda.gov/regulatory-information/search-fda-guidance-documents/computer-software-assurance-production-and-quality-management-system-software.

3. U.S. Food and Drug Administration. Considerations for the Use of Artificial Intelligence To Support Regulatory Decision-Making for Drug and Biological Products. Draft guidance (January 2025). Available at: https://www.fda.gov/regulatory-information/search-fda-guidance-documents/considerations-use-artificial-intelligence-support-regulatory-decision-making-drug-and-biological.

4. European Commission. EU GMP Annex 22: Artificial Intelligence (Draft consultation guideline, 2025). EudraLex Volume 4. Available at: https://health.ec.europa.eu/document/download/5f38a92d-bb8e-4264-8898-ea076e926db6_en?filename=mp_vol4_chap4_annex22_consultation_guideline_en.pdf.

5. Cheng M, Lee C, Khadpe P, Yu S, Han D, Jurafsky D. Sycophantic AI decreases prosocial intentions and promotes dependence. Science. 2026;391(6792):eaec8352. DOI: 10.1126/science.aec8352.

6. Hussain AS, Shivanand P, Johnson RD. Application of neural computing in pharmaceutical product development: computer aided formulation design. Drug Development and Industrial Pharmacy. 1994;20(10):1739–1752.

7. Chew K, Yang M. FDA’s Elsa AI switches from Claude to Gemini: What sponsors need to know. Clinical Leader. March 12, 2026. Available at: https://www.clinicalleader.com/doc/fda-s-elsa-ai-switches-from-claude-to-gemini-what-sponsors-need-to-know-0001.